An offline-first, self-hosted commute helper that keeps your data with you

A smaller product idea with a much bigger systems footprint underneath it.

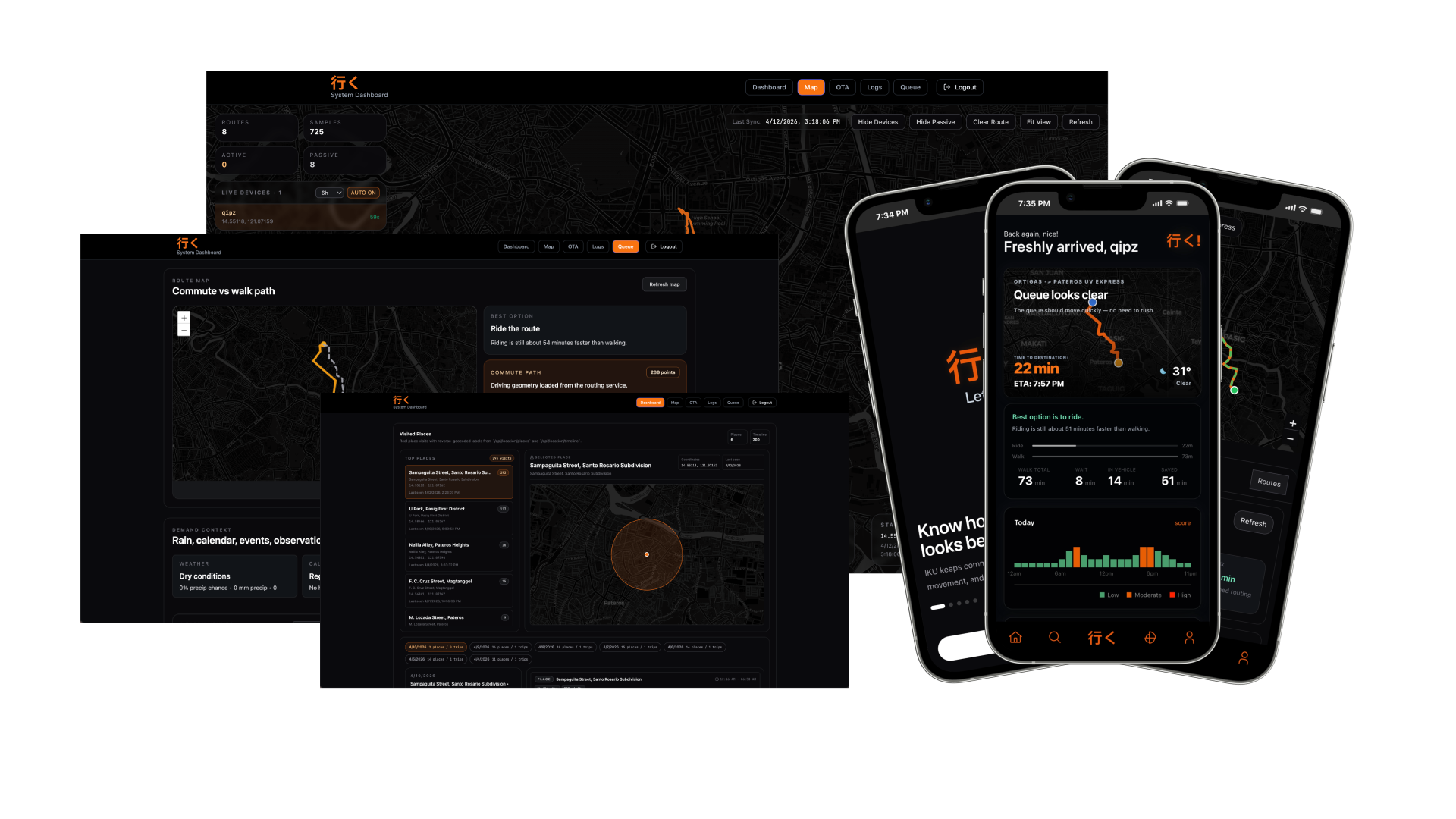

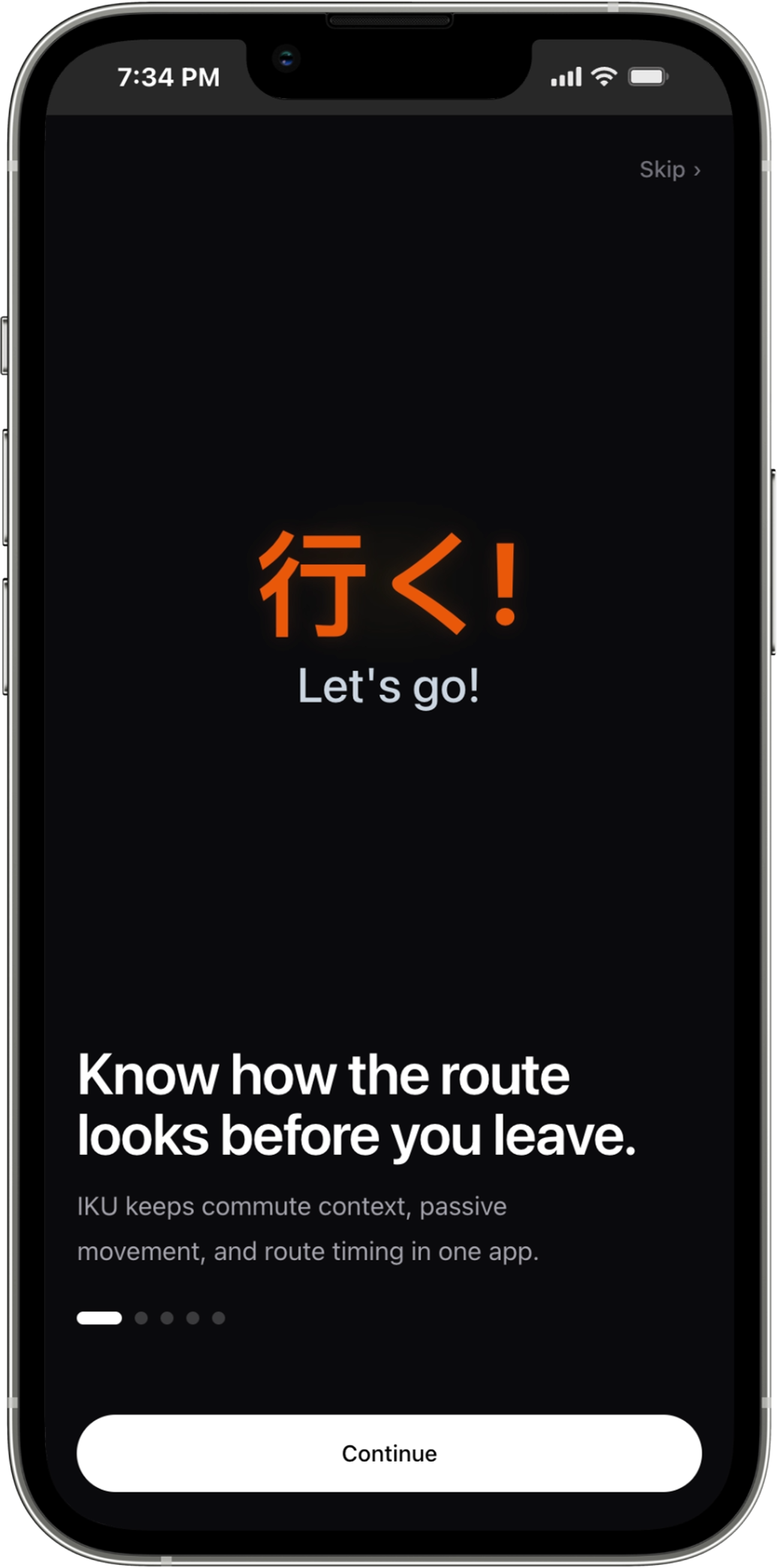

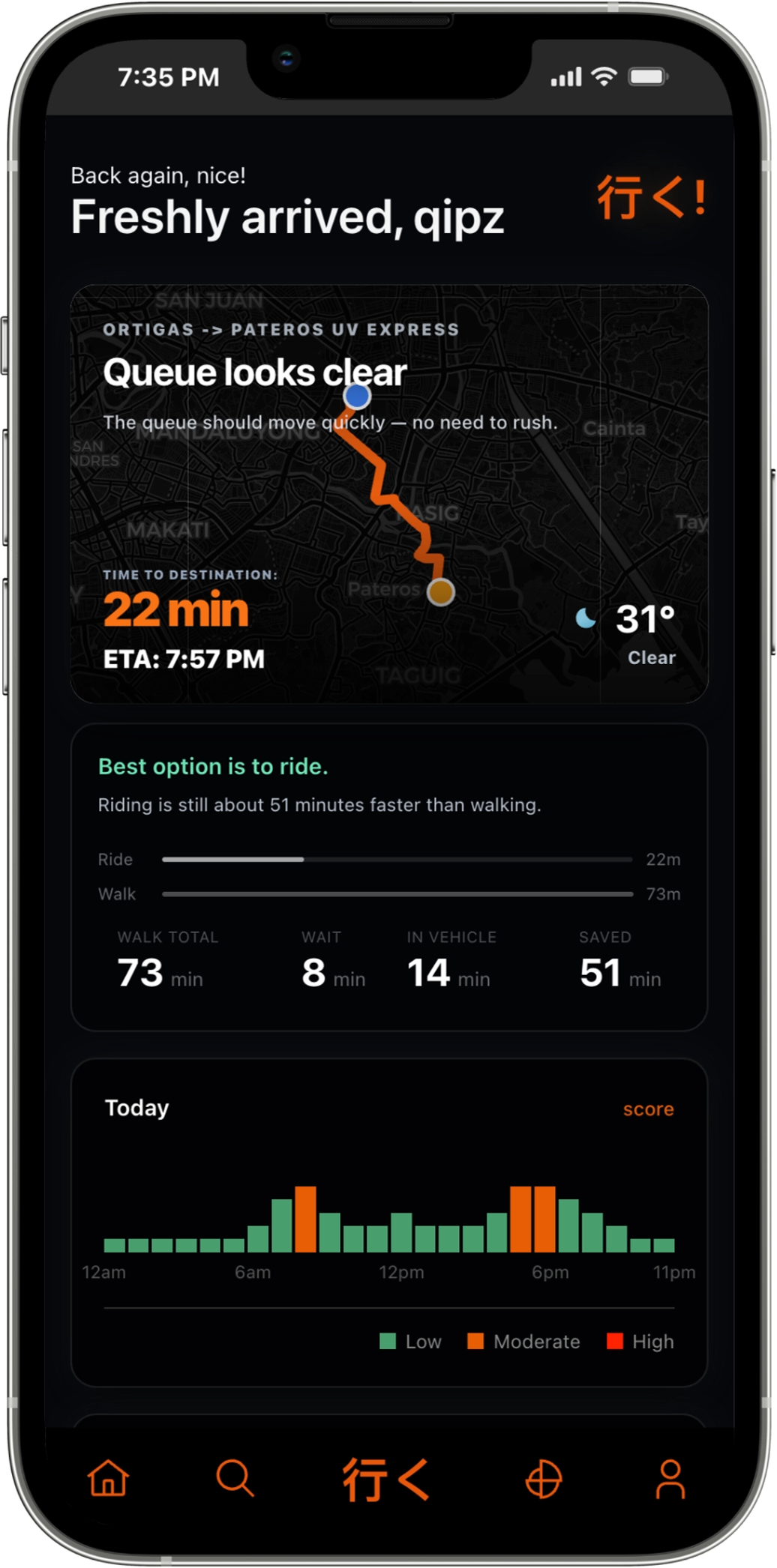

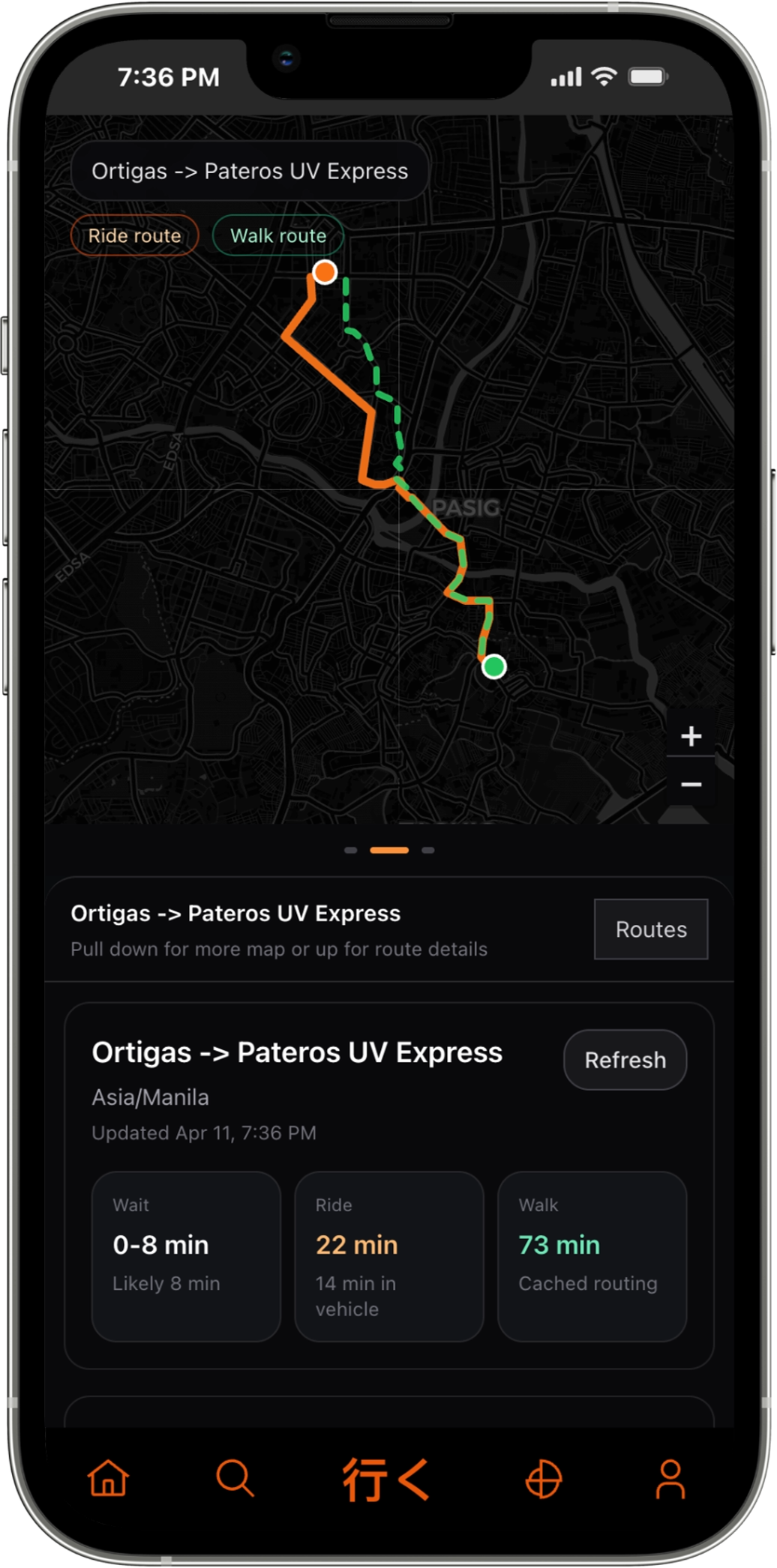

IKU is a commute helper shaped around a practical question: what is the fastest, most sensible way to move through a familiar route right now? Instead of acting like a generic map app, it compares queue conditions, ride time, and walking time through a product built for repeat daily use.

The project combines route planning, passive movement history, live queue-aware timing, and operational tooling in one system. The job was not just to make those pieces work independently, but to make them feel like one coherent product instead of a stack of experiments.

That meant owning more of the surface area directly: self-hosted routing, local storage before cloud sync, native tracking support, and an OTA delivery path for updates outside the usual app store cycle.

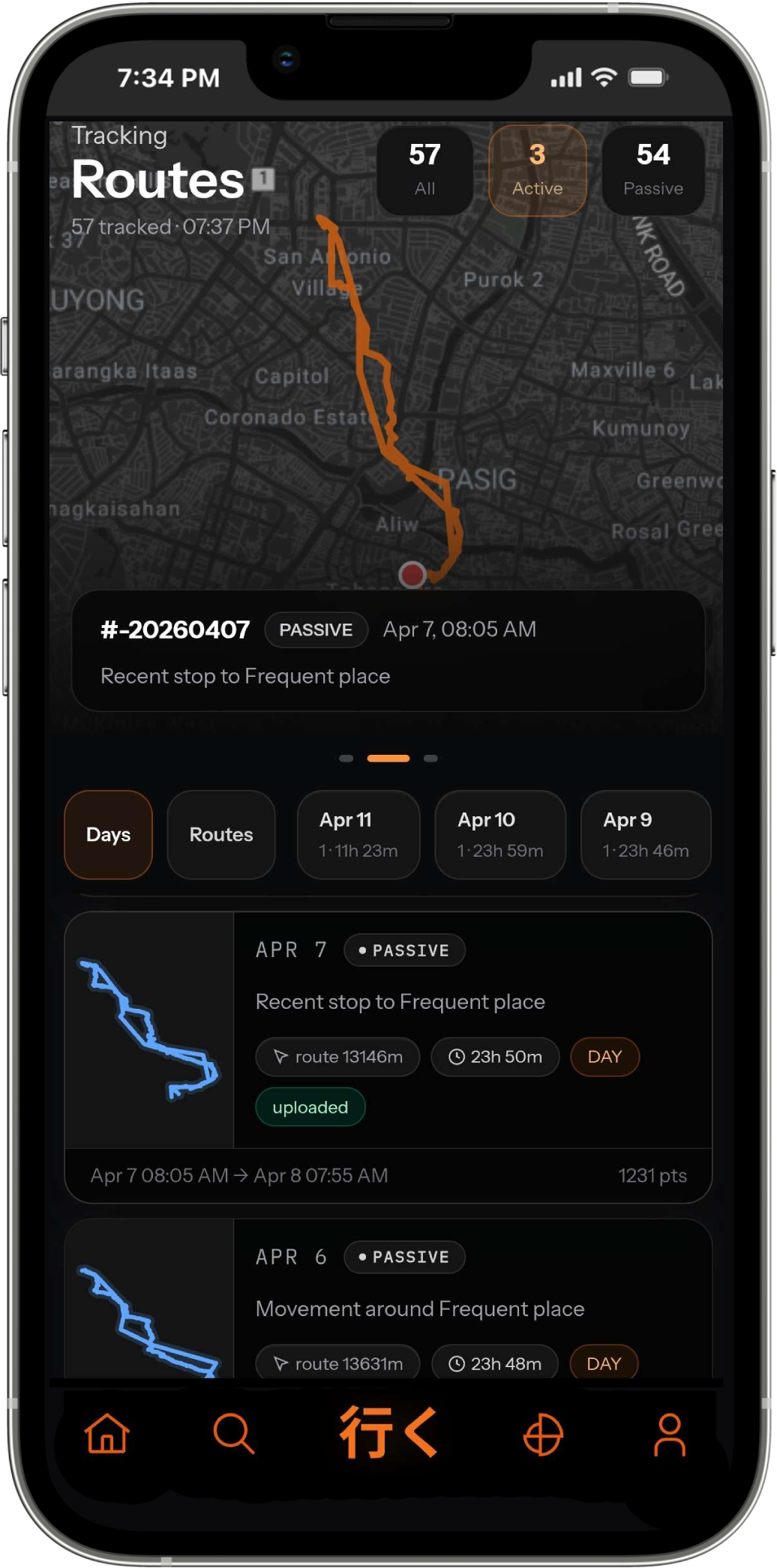

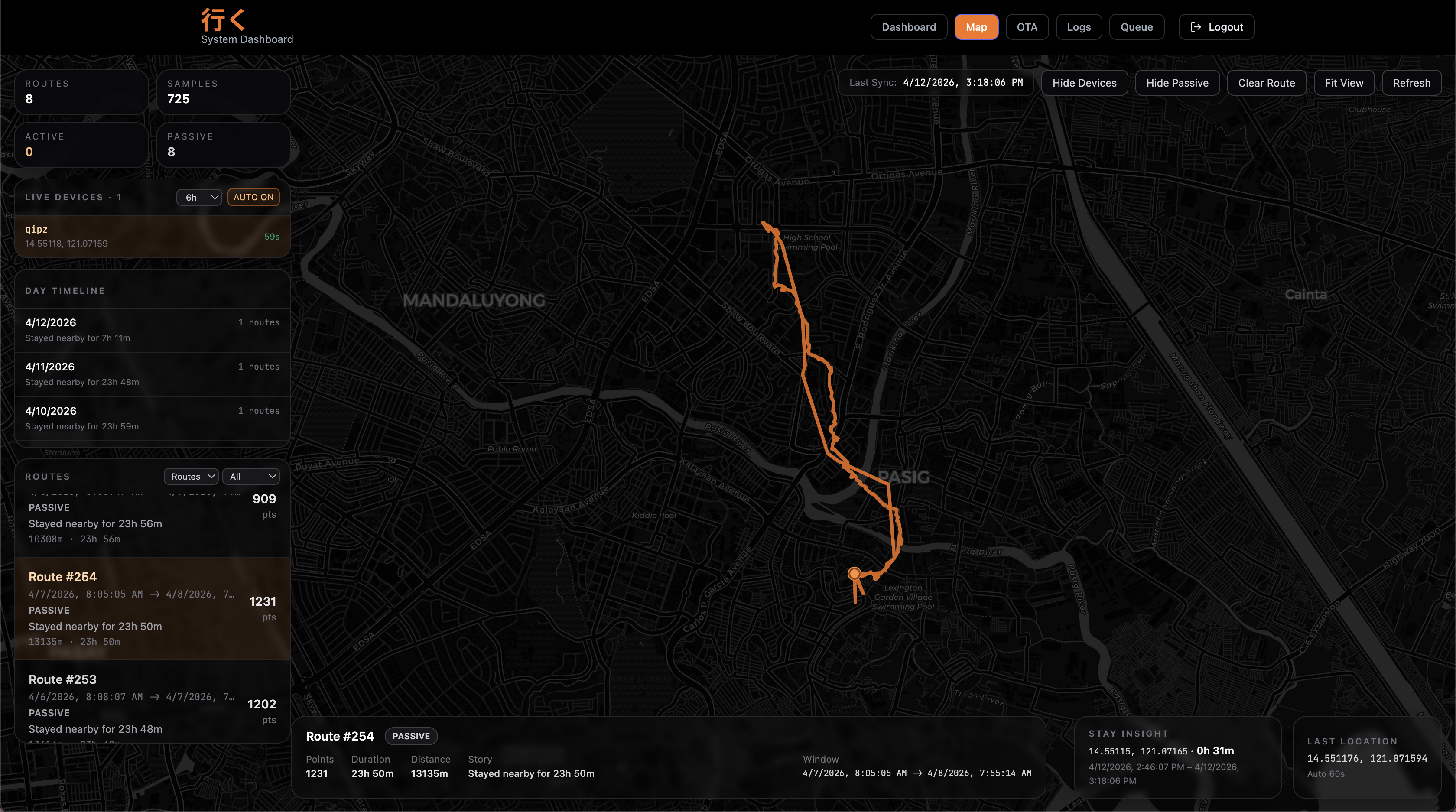

Location data is personal. I wanted a tracker I owned end to end — raw GPS, activity labels, routes, and the day view. So I built a Strava-meets-Life360 Android app with passive background tracking, activity recognition, stay-point detection, and a Google-Timeline-style day view, all synced through a Cloudflare Worker I wrote myself.

- Queue-aware commute guidance across ride and walking tradeoffs

- Passive movement history turned into a readable daily timeline

- Offline-first local storage with sync when connectivity returns

- Self-hosted OSRM routing behind an app-controlled backend

- Admin tooling for routes, queue data, and OTA delivery operations

No third-party tracking SDK. No Mapbox account. Just GPS, a SQLite queue, and a /sync endpoint. The OTA pipeline that ships the app is its own compact system: Worker API, D1 history, KV bundles, and a /check endpoint.

Three screens do the work: pick your route, read the conditions, review your day. Nothing in the way.

Offline-first data architecture

IKU is built around the assumption that connectivity is unreliable. Every write starts on-device — the native SQLite plugin queues payloads locally before anything touches the network. From there, Dexie DB acts as the reactive frontend store, keeping the UI snappy and hydrated from local state alone.

When connectivity is available, the sync boundary kicks in. The plugin replays its queue through the Cloudflare Worker, which validates and orchestrates the upstream writes. Structured app records land in D1.

The Worker also handles the reverse flow. When a new device is set up, it fetches the user's canonical state from D1 and hydrates the local stores, so the device is fully caught up before the user ever goes offline again.

The result: the app is fully usable offline, syncs transparently in the background, and never blocks the user on a network call.
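The queue-then-replay flow described above can be sketched as follows. This is a minimal illustration, not the plugin's actual code: `LocalWriteQueue`, `QueuedWrite`, and `Uploader` are hypothetical names, the queue lives in memory instead of SQLite, and the uploader is synchronous to keep the example short.

```typescript
// Hypothetical shape of a queued write; the article does not show the real payload.
interface QueuedWrite {
  id: number;
  payload: string;
}

// Hypothetical uploader: returns true once the server has accepted the write.
// Modelled as synchronous to keep the sketch short; a real one would be async.
type Uploader = (write: QueuedWrite) => boolean;

class LocalWriteQueue {
  private items: QueuedWrite[] = [];
  private nextId = 1;

  // Every write lands in the local queue first; nothing here touches the network.
  enqueue(payload: string): QueuedWrite {
    const write: QueuedWrite = { id: this.nextId, payload };
    this.nextId += 1;
    this.items.push(write);
    return write;
  }

  pending(): number {
    return this.items.length;
  }

  // Replay in insertion order. A write is removed only after the uploader
  // confirms it, and the first failure stops the run so nothing is lost.
  replay(upload: Uploader): number {
    let sent = 0;
    while (this.items.length > 0) {
      const head = this.items[0];
      if (head === undefined || !upload(head)) break;
      this.items.shift();
      sent += 1;
    }
    return sent;
  }
}
```

Stopping at the first failure keeps ordering intact: whatever was not confirmed stays queued for the next time connectivity returns.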

Native tracking engine

Activity recognition, smoothed for reality.

Google's raw ActivityRecognitionResult is noisy. The receiver builds a score histogram, then applies three layers of smoothing: a low-confidence hold, a short hold that prevents thrashing within 12 seconds, and a driving confirmation that requires two consecutive high-confidence reads. IN_VEHICLE maps to DRIVING.
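A rough sketch of the three smoothing layers, written in TypeScript for illustration even though the real receiver is native Android code. The 12-second hold and the two-read driving confirmation come from the text; the confidence thresholds (50 and 75), the `ActivitySmoother` name, and the choice to feed it one top-scoring reading at a time (skipping the histogram step) are assumptions.

```typescript
type Activity = "STILL" | "WALKING" | "DRIVING";

interface Reading {
  activity: Activity;
  confidence: number; // 0..100
  atMs: number;
}

const LOW_CONFIDENCE = 50; // assumption: threshold is not given in the article
const HIGH_CONFIDENCE = 75; // assumption: threshold is not given in the article
const SHORT_HOLD_MS = 12000; // from the article
const DRIVING_CONFIRMATIONS = 2; // from the article

class ActivitySmoother {
  private current: Activity = "STILL";
  private lastChangeMs = -Infinity;
  private drivingStreak = 0;

  update(r: Reading): Activity {
    // Count consecutive high-confidence driving reads; anything else resets it.
    if (r.activity === "DRIVING" && r.confidence >= HIGH_CONFIDENCE) {
      this.drivingStreak += 1;
    } else {
      this.drivingStreak = 0;
    }

    // Layer 1: low-confidence hold. Weak reads never change the label.
    if (r.confidence < LOW_CONFIDENCE) return this.current;
    if (r.activity === this.current) return this.current;

    // Layer 2: short hold. No second change within 12 s of the last one.
    if (r.atMs - this.lastChangeMs < SHORT_HOLD_MS) return this.current;

    // Layer 3: driving needs two consecutive high-confidence reads.
    if (r.activity === "DRIVING" && this.drivingStreak < DRIVING_CONFIRMATIONS) {
      return this.current;
    }

    this.current = r.activity;
    this.lastChangeMs = r.atMs;
    return this.current;
  }
}
```

The layers run cheapest-first, so most noisy reads are dropped before the stricter driving rule is ever consulted.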

PassiveLocationDriftGuard. GPS fixes while STILL are notoriously noisy. The guard uses a two-confirmation spike detection scheme: a point must be confirmed by a second nearby reading before it's accepted. Any fix implying a speed over 35 m/s is rejected outright, and baselines older than 5 minutes are cleared so drift can't compound over time.
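One way to read those rules, as a TypeScript sketch and not the actual Android class. The 35 m/s limit and the 5-minute baseline expiry come from the text. The 25 m radii, the `DriftGuardSketch` name, and the decision to accept fixes close to the baseline immediately while holding larger jumps for confirmation are all assumptions.

```typescript
interface Fix {
  lat: number;
  lng: number;
  atMs: number;
}

// Great-circle distance in metres (haversine formula).
function haversineMeters(a: { lat: number; lng: number }, b: { lat: number; lng: number }): number {
  const R = 6371000;
  const toRad = (d: number) => (d * Math.PI) / 180;
  const sLat = Math.sin(toRad(b.lat - a.lat) / 2);
  const sLng = Math.sin(toRad(b.lng - a.lng) / 2);
  const h = sLat * sLat + Math.cos(toRad(a.lat)) * Math.cos(toRad(b.lat)) * sLng * sLng;
  return 2 * R * Math.asin(Math.sqrt(h));
}

const MAX_SPEED_MPS = 35; // from the article
const STALE_BASELINE_MS = 5 * 60 * 1000; // from the article
const SPIKE_RADIUS_M = 25; // assumption: jumps beyond this need confirmation
const CONFIRM_RADIUS_M = 25; // assumption: how close the second reading must be

type Verdict = "accepted" | "pending" | "rejected";

class DriftGuardSketch {
  private baseline: Fix | null = null;
  private candidate: Fix | null = null;

  offer(fix: Fix): Verdict {
    // Clear a stale baseline so old drift cannot compound.
    if (this.baseline && fix.atMs - this.baseline.atMs > STALE_BASELINE_MS) {
      this.baseline = null;
      this.candidate = null;
    }
    if (!this.baseline) {
      this.baseline = fix;
      return "accepted";
    }
    const dist = haversineMeters(this.baseline, fix);
    const dtSec = (fix.atMs - this.baseline.atMs) / 1000;
    // Duplicate or out-of-order timestamps, or physically implausible speed.
    if (dtSec <= 0 || dist / dtSec > MAX_SPEED_MPS) return "rejected";
    if (dist <= SPIKE_RADIUS_M) {
      this.baseline = fix;
      this.candidate = null;
      return "accepted";
    }
    // A jump: accept only when a second reading lands near the first one.
    if (this.candidate && haversineMeters(this.candidate, fix) <= CONFIRM_RADIUS_M) {
      this.baseline = fix;
      this.candidate = null;
      return "accepted";
    }
    this.candidate = fix;
    return "pending";
  }
}
```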

StayPointDetector. While the device is STILL, incoming GPS fixes accumulate into a running centroid. If a new fix drifts beyond 80 m of the centroid, the current stay is flushed as a StayVisit. Stays shorter than 3 minutes are discarded. Gaps longer than 10 minutes split the stay into two separate visits.
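The centroid logic can be sketched like this. The 80 m radius, 3-minute minimum, and 10-minute gap split are taken from the text; the class and field names are hypothetical, and starting a fresh accumulation with the fix that triggered the flush is an assumption.

```typescript
interface Fix {
  lat: number;
  lng: number;
  atMs: number;
}

interface StayVisit {
  lat: number;
  lng: number;
  startMs: number;
  endMs: number;
}

// Great-circle distance in metres (haversine formula).
function haversineMeters(a: { lat: number; lng: number }, b: { lat: number; lng: number }): number {
  const R = 6371000;
  const toRad = (d: number) => (d * Math.PI) / 180;
  const sLat = Math.sin(toRad(b.lat - a.lat) / 2);
  const sLng = Math.sin(toRad(b.lng - a.lng) / 2);
  const h = sLat * sLat + Math.cos(toRad(a.lat)) * Math.cos(toRad(b.lat)) * sLng * sLng;
  return 2 * R * Math.asin(Math.sqrt(h));
}

const STAY_RADIUS_M = 80; // from the article
const MIN_STAY_MS = 3 * 60 * 1000; // from the article
const MAX_GAP_MS = 10 * 60 * 1000; // from the article

class StayPointSketch {
  readonly visits: StayVisit[] = [];
  private sumLat = 0;
  private sumLng = 0;
  private count = 0;
  private startMs = 0;
  private lastMs = 0;

  // Feed fixes recorded while the device is STILL, in timestamp order.
  add(fix: Fix): void {
    if (this.count > 0) {
      const centroid = { lat: this.sumLat / this.count, lng: this.sumLng / this.count };
      const drifted = haversineMeters(centroid, fix) > STAY_RADIUS_M;
      const gapped = fix.atMs - this.lastMs > MAX_GAP_MS;
      if (drifted || gapped) this.flush();
    }
    if (this.count === 0) this.startMs = fix.atMs;
    this.sumLat += fix.lat;
    this.sumLng += fix.lng;
    this.count += 1;
    this.lastMs = fix.atMs;
  }

  // Emit the current stay if it lasted long enough, then reset the accumulator.
  flush(): void {
    if (this.count > 0 && this.lastMs - this.startMs >= MIN_STAY_MS) {
      this.visits.push({
        lat: this.sumLat / this.count,
        lng: this.sumLng / this.count,
        startMs: this.startMs,
        endMs: this.lastMs,
      });
    }
    this.sumLat = 0;
    this.sumLng = 0;
    this.count = 0;
  }
}
```

Keeping running sums instead of the full fix list means the centroid update costs constant memory, which matters for a detector that may run for hours.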

TripStatisticsStore.TripBuilder. On a transition from STILL to any moving activity, a TripBuilder starts accumulating GPS fixes: distance, a mode-time histogram, and reservoir-sampled waypoints (max 20). When the device returns to STILL, the builder flushes a TripRecord with the dominant mode, per-mode percentages, distance, and the waypoint polyline.
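Reservoir sampling keeps a bounded, uniformly random subset of a stream whose length is not known in advance. Below is a sketch of the classic Algorithm R with a capacity parameter; the text only states that waypoints are reservoir-sampled with a maximum of 20, so the exact variant the app uses is an assumption, as are the `WaypointReservoir` name and the injectable random source.

```typescript
class WaypointReservoir<T> {
  readonly samples: T[] = [];
  private seen = 0;
  private readonly capacity: number;
  private readonly random: () => number;

  // `random` must return values in [0, 1); injectable so tests can be deterministic.
  constructor(capacity: number, random: () => number = Math.random) {
    this.capacity = capacity;
    this.random = random;
  }

  offer(item: T): void {
    this.seen += 1;
    // Fill phase: keep everything until the reservoir is full.
    if (this.samples.length < this.capacity) {
      this.samples.push(item);
      return;
    }
    // Replace phase: item number `seen` survives with probability capacity / seen.
    const j = Math.floor(this.random() * this.seen);
    if (j < this.capacity) this.samples[j] = item;
  }
}
```

Because replacements overwrite arbitrary slots, the samples are no longer in chronological order. A polyline built from them would need a sort by timestamp first.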

ActivityRecognitionWatchdog. A 5-minute AlarmManager alarm checks whether the last activity event is older than 12 minutes. If so, triggerRecover re-registers with Google Play Services, which handles the case where the OS silently killed the registration. While the device is STILL, the watchdog also fires a heartbeat sync if the last still-sync was more than 8 minutes ago.
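The watchdog's decision on each alarm tick reduces to two timestamp comparisons. The sketch below isolates that decision as a pure function; the 12-minute and 8-minute thresholds are from the text, while `watchdogTick` and the state and action shapes are hypothetical, and the AlarmManager scheduling itself is left out.

```typescript
const ACTIVITY_STALE_MS = 12 * 60 * 1000; // from the article
const HEARTBEAT_STALE_MS = 8 * 60 * 1000; // from the article

interface WatchdogState {
  nowMs: number;
  lastActivityEventMs: number;
  lastStillSyncMs: number;
  currentActivity: string;
}

interface WatchdogActions {
  reRegister: boolean; // re-register for activity updates
  heartbeatSync: boolean; // push a still-state heartbeat
}

// Decide what a single alarm tick should do, with no side effects.
function watchdogTick(s: WatchdogState): WatchdogActions {
  return {
    reRegister: s.nowMs - s.lastActivityEventMs > ACTIVITY_STALE_MS,
    heartbeatSync:
      s.currentActivity === "STILL" && s.nowMs - s.lastStillSyncMs > HEARTBEAT_STALE_MS,
  };
}
```

Keeping the decision separate from the alarm plumbing makes the thresholds easy to unit test without a device.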

A Google Timeline clone, built on Vue.

The timeline engine is client-side.

The admin map page lazy-loads Leaflet, then polls /api/location/fetchAll with a cursor-based paginator: up to 20 pages per refresh, with 500 passive points per page. Once the points are local, a chain of Vue computed properties turns raw GPS fixes into a day-by-day timeline with start/end place labels, mini Leaflet maps per segment, and stay duration stats. No server does any of this computation.

The first long stay becomes the home candidate. The second-longest stay more than 220 m away becomes the office candidate. Segments between stays become trips: "At Home for 7h 32m → Went to Office in 22m".

passiveDayTimeline → buildDayTripSegments → stay detection at 130 m / 20 min → home/office labeling → mini Leaflet maps per segment.

If stays can't resolve the pattern, a fallback time-distance chunker splits by 15-minute gaps or 800 m jumps.
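A minimal sketch of such a fallback chunker, assuming the points arrive sorted by timestamp. The 15-minute and 800 m thresholds are from the text; `chunkByGapOrJump` and the `Point` shape are hypothetical names.

```typescript
interface Point {
  lat: number;
  lng: number;
  atMs: number;
}

// Great-circle distance in metres (haversine formula).
function haversineMeters(a: { lat: number; lng: number }, b: { lat: number; lng: number }): number {
  const R = 6371000;
  const toRad = (d: number) => (d * Math.PI) / 180;
  const sLat = Math.sin(toRad(b.lat - a.lat) / 2);
  const sLng = Math.sin(toRad(b.lng - a.lng) / 2);
  const h = sLat * sLat + Math.cos(toRad(a.lat)) * Math.cos(toRad(b.lat)) * sLng * sLng;
  return 2 * R * Math.asin(Math.sqrt(h));
}

const MAX_GAP_MS = 15 * 60 * 1000; // from the article
const MAX_JUMP_M = 800; // from the article

// Split a time-ordered point list wherever consecutive points are too far
// apart in time or in space.
function chunkByGapOrJump(points: Point[]): Point[][] {
  const chunks: Point[][] = [];
  let current: Point[] = [];
  points.forEach((p) => {
    const prev = current[current.length - 1];
    if (prev && (p.atMs - prev.atMs > MAX_GAP_MS || haversineMeters(prev, p) > MAX_JUMP_M)) {
      chunks.push(current);
      current = [];
    }
    current.push(p);
  });
  if (current.length > 0) chunks.push(current);
  return chunks;
}
```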

Leaflet mini-maps use Map<id, element> refs — not v-for index refs — so reorders don't hand Leaflet the wrong container.

What it taught me.

GPS is lying to you when you're still. A phone sitting on a desk reports 10–30 m of drift every few minutes. Without PassiveLocationDriftGuard's two-confirmation spike detection, the stay-point detector would have constantly thought the device was moving. The fix: don't accept a point until a second reading confirms it's real.

Android kills background registrations silently. On some devices, the OS cancels your ActivityRecognition subscription without firing any callback. The only detection: a watchdog that checks how long since the last event. More than 12 minutes — re-register. This was responsible for several hours of missing tracking data.

Two upload paths mean double deduplication. The native plugin and the Dexie JS path can both submit the same physical location. The sampleHash approach, SHA-256(deviceId|timestamp|lat6|lng6), lets the backend deduplicate silently: a repeated hash is counted in dedupedCount and never written twice.
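The dedupe idea can be sketched as key construction plus a seen-set. This assumes that lat6 and lng6 mean coordinates rounded to six decimal places, which the text does not spell out. To stay runtime-agnostic, the hash function is passed in instead of calling SHA-256 directly, and `sampleKey`, `ingest`, and `insertedCount` are hypothetical names (only `dedupedCount` appears in the text).

```typescript
interface Sample {
  deviceId: string;
  timestamp: number;
  lat: number;
  lng: number;
}

// Assumption: lat6/lng6 are coordinates rounded to six decimal places.
function sampleKey(s: Sample): string {
  return [s.deviceId, String(s.timestamp), s.lat.toFixed(6), s.lng.toFixed(6)].join("|");
}

interface IngestResult {
  insertedCount: number;
  dedupedCount: number;
}

// `seen` stands in for a unique index on the stored hash column.
// `hash` stands in for SHA-256; any collision-resistant function fits here.
function ingest(batch: Sample[], seen: Set<string>, hash: (key: string) => string): IngestResult {
  const result: IngestResult = { insertedCount: 0, dedupedCount: 0 };
  batch.forEach((s) => {
    const h = hash(sampleKey(s));
    if (seen.has(h)) {
      result.dedupedCount += 1; // same physical sample, skip the write
    } else {
      seen.add(h);
      result.insertedCount += 1;
    }
  });
  return result;
}
```

Rounding before hashing is what lets two upload paths agree: floating-point noise past the sixth decimal no longer changes the key.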

Cursor pagination for time-series needs both dimensions. Paginating by timestamp alone breaks when multiple rows share the same millisecond. The (timestamp, id) compound cursor — sent back from the server and checked in the next query — gives stable, gap-free pagination even when points arrive in bursts.
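To make the compound cursor concrete, here is an in-memory sketch of keyset pagination over (timestamp, id). The `fetchPage` function and the row shape are hypothetical, and a real backend would push the comparison into SQL instead of filtering an array.

```typescript
interface Row {
  timestamp: number;
  id: number;
}

interface Cursor {
  timestamp: number;
  id: number;
}

// True when `row` sorts strictly after the cursor in (timestamp, id) order.
// In SQL this is the keyset predicate:
//   timestamp > ? OR (timestamp = ? AND id > ?)
function isAfter(row: Row, cursor: Cursor): boolean {
  return (
    row.timestamp > cursor.timestamp ||
    (row.timestamp === cursor.timestamp && row.id > cursor.id)
  );
}

function fetchPage(
  rows: Row[],
  cursor: Cursor | null,
  limit: number
): { page: Row[]; next: Cursor | null } {
  // Stable total order: timestamp first, id as the tie-breaker.
  const sorted = rows.slice().sort((a, b) => a.timestamp - b.timestamp || a.id - b.id);
  const remaining = cursor ? sorted.filter((r) => isAfter(r, cursor)) : sorted;
  const page = remaining.slice(0, limit);
  const last = page[page.length - 1];
  // Hand back a cursor only when more rows remain beyond this page.
  const next =
    last && remaining.length > limit ? { timestamp: last.timestamp, id: last.id } : null;
  return { page, next };
}
```

With a timestamp-only cursor, a page boundary that falls inside a burst of rows sharing one millisecond would either repeat or skip the rest of that burst. The id tie-breaker removes that ambiguity.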

An OR condition in SQL can silently scan millions of rows. (account_key = ? OR device_id = ?) looked harmless until EXPLAIN QUERY PLAN revealed a USE TEMP B-TREE FOR ORDER BY and 11M rows read in 24 hours. The fix: branch in code so each path hits exactly one index. The temp B-tree disappeared and reads dropped by over 90%.
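Branching in code might look like the sketch below, where each lookup kind produces a query with a single equality predicate. Only the `account_key` and `device_id` columns come from the text; the `passive_points` table, the ordering columns, and the `buildPointsQuery` helper are hypothetical.

```typescript
type Lookup =
  | { kind: "account"; accountKey: string }
  | { kind: "device"; deviceId: string };

interface Query {
  sql: string;
  params: Array<string | number>;
}

// Hypothetical table and ordering columns; only account_key and device_id
// are named in the article.
function buildPointsQuery(lookup: Lookup, limit: number): Query {
  const tail = " ORDER BY timestamp, id LIMIT ?";
  if (lookup.kind === "account") {
    return {
      sql: "SELECT * FROM passive_points WHERE account_key = ?" + tail,
      params: [lookup.accountKey, limit],
    };
  }
  return {
    sql: "SELECT * FROM passive_points WHERE device_id = ?" + tail,
    params: [lookup.deviceId, limit],
  };
}
```

With one equality column per query, a composite index that starts with that column and continues with the ordering columns can satisfy both the filter and the ORDER BY, which is what lets the planner skip the temporary sort.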